Enterprise AI Architecture Explained in Simple Terms

Most companies don’t fail at AI because they can’t build a model. They fail because they can’t run AI reliably at enterprise scale—across teams, systems, data sources, security requirements, and changing business rules.

That’s exactly what enterprise AI architecture is for.

It’s the blueprint that connects your data, AI models, workflows, and business applications in a way that is scalable, secure, and measurable. Think of it as the “operating system” for production AI: not just intelligence, but infrastructure.

In this guide, we’ll explain enterprise AI architecture without heavy jargon, show the core building blocks, and share the practical decisions that determine whether AI stays a pilot or becomes a platform.

What Is Enterprise AI Architecture?

Enterprise AI architecture is the structured design that enables AI systems to operate inside an organization—safely, at scale, and across multiple departments.

It defines how you:

collect and govern data

serve models (LLMs and traditional ML)

evaluate quality and reliability

integrate AI into workflows and business systems

monitor performance, cost, and risk over time

In other words, it’s not “the model.” It’s everything required to make AI useful in real operations.

Why Architecture Matters in Enterprise AI

If AI is only used by a few people in a sandbox, architecture feels optional. Once you move to production, it becomes essential.

Enterprise AI architecture matters because it helps you manage:

Scale: traffic spikes, multiple teams, and many use cases

Security & compliance: access control, audit logs, PII handling

Reliability: predictable behavior, graceful failure, safe fallbacks

Cost control: token usage, caching, routing, observability

Change management: models update, policies change, workflows evolve

A simple mental model: without architecture, you get “AI demos.” With architecture, you get “AI systems.”
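To make the cost-control item above concrete, here is a minimal sketch of response caching plus model routing. Everything in it is an assumption for illustration: the `small` and `large` model names, the 20-word routing threshold, and the `call_model` stub standing in for a real model API.

```python
import hashlib

# In-memory cache and call counters; a real system would use a shared store.
CACHE: dict[str, str] = {}
CALLS = {"small": 0, "large": 0}


def route(prompt: str) -> str:
    # Hypothetical rule: short prompts go to the cheaper model.
    return "small" if len(prompt.split()) <= 20 else "large"


def call_model(model: str, prompt: str) -> str:
    # Stub for a real model call; it only counts invocations.
    CALLS[model] += 1
    return f"[{model}] response"


def complete(prompt: str) -> str:
    # Normalize the prompt so trivially different inputs share a cache key.
    key = hashlib.sha256(prompt.strip().lower().encode("utf-8")).hexdigest()
    if key in CACHE:
        return CACHE[key]
    response = call_model(route(prompt), prompt)
    CACHE[key] = response
    return response


complete("What is our refund policy?")
complete("what is our refund policy?  ")  # served from cache, no model call
print(CALLS)
```

The second call never reaches a model, which is the kind of saving that only shows up when caching and routing sit in a shared layer rather than inside each application.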

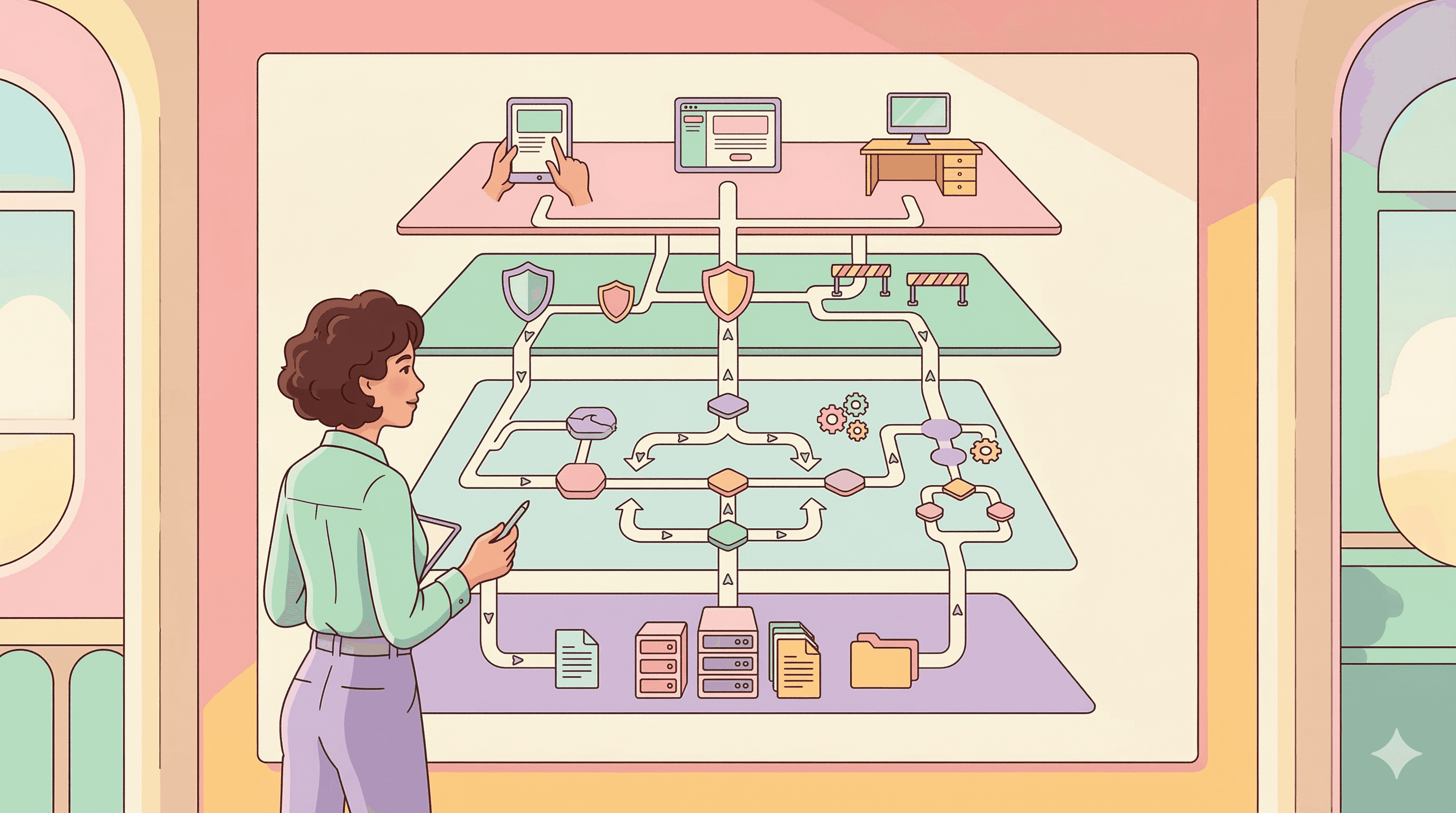

The Core Layers of Enterprise AI Architecture

Different organizations name layers differently, but the building blocks are consistent. Here’s a clean reference view that keeps things simple.

| Layer | What it does | Typical components |
|---|---|---|
| Data layer | Stores and governs business data | Data warehouse, CRM/ERP data, data lake, access policies |
| Knowledge & retrieval layer | Makes internal knowledge usable | Search, RAG pipeline, vector DB, embeddings |
| Model layer | Produces predictions or responses | LLMs, ML models, model serving, routing |
| Orchestration & decision layer | Chooses what to do next | Workflow engine, decision logic, agent runtime, tool calling |
| Integration layer | Connects AI to systems of record | APIs, events/webhooks, connectors, queues |
| Governance & safety layer | Keeps AI controlled and compliant | Guardrails, permissions, audit logs, policy enforcement |
| Observability & evaluation layer | Measures quality, errors, cost | Monitoring, eval suites, logging, analytics |
| Application layer | Where users experience AI | Chat UI, agent apps, internal tools, portals |

This is the “enterprise” part: AI doesn’t live in one box. It runs through layers.

Two Common Enterprise Patterns

Most enterprise deployments fall into one of these patterns (or both).

1) Knowledge-first AI (RAG-powered)

If the AI needs to answer based on internal documentation, policies, product manuals, or case history, you typically need a strong retrieval layer (RAG).

It usually looks like:

User query → retrieve relevant sources → model generates response → citations/controls → publish output

This is the foundation for accurate, “company-aware” AI experiences.
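The knowledge-first flow above can be sketched in a few lines. This is a toy version under stated assumptions: retrieval uses simple word overlap instead of embeddings and a vector DB, `generate` is a stand-in for a real model call, and the document IDs and texts are made up.

```python
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str
    text: str


def tokenize(text: str) -> set[str]:
    # Lowercased word set; a real system would use embeddings instead.
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(query: str, corpus: list[Document], top_k: int = 2) -> list[Document]:
    # Rank documents by how many query words they share (toy relevance score).
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(doc.text)), doc) for doc in corpus]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]


def generate(query: str, sources: list[Document]) -> str:
    # Stand-in for a model call: it just echoes the retrieved context.
    context = " ".join(doc.text for doc in sources)
    return f"Based on internal sources: {context}"


def answer(query: str, corpus: list[Document]) -> dict:
    sources = retrieve(query, corpus)
    if not sources:
        # Control step: refuse rather than answer without grounding.
        return {"answer": "No supporting source found.", "citations": []}
    return {
        "answer": generate(query, sources),
        "citations": [doc.doc_id for doc in sources],
    }


CORPUS = [
    Document("policy-001", "Refunds are allowed within 30 days of purchase."),
    Document("manual-007", "Reset the router by holding the power button."),
]

result = answer("Are refunds allowed after purchase?", CORPUS)
print(result["citations"])  # ['policy-001']
```

Returning citations alongside the answer, and refusing when nothing is retrieved, is what keeps the control step separate from generation.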

2) Workflow-first AI (Agentic + orchestration)

If the AI must do things—update a CRM record, check eligibility, trigger a process, route a case—then orchestration becomes the center.

It usually looks like:

Intent → context → decision → action (tools/APIs) → verification → audit

This is where enterprise AI starts behaving like an execution system rather than a chatbot.
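As a rough illustration of the workflow-first flow above, the sketch below walks one request through intent, context, decision, action, verification, and audit. The in-memory `CRM` dictionary, the 90-day eligibility rule, and the `upgrade_tier` tool are hypothetical placeholders for real systems and policies.

```python
from dataclasses import dataclass, field


@dataclass
class CaseContext:
    customer_id: str
    account_age_days: int


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, step: str, detail: str) -> None:
        self.entries.append({"step": step, "detail": detail})


# Hypothetical in-memory stand-in for a CRM system of record.
CRM = {"cust-42": {"tier": "standard"}}


def upgrade_tier(customer_id: str) -> None:
    # The "tool" the workflow is allowed to call.
    CRM[customer_id]["tier"] = "premium"


def run_workflow(intent: str, context: CaseContext, audit: AuditLog) -> str:
    audit.record("intent", intent)
    audit.record("context", f"customer={context.customer_id}")

    # Decision: a plain rule here; it could be a model or a policy engine.
    eligible = intent == "upgrade" and context.account_age_days >= 90
    audit.record("decision", "eligible" if eligible else "not eligible")
    if not eligible:
        return "escalated"

    # Action: call the tool/API.
    upgrade_tier(context.customer_id)
    audit.record("action", "upgrade_tier")

    # Verification: read the record back to confirm the change landed.
    verified = CRM[context.customer_id]["tier"] == "premium"
    audit.record("verification", "ok" if verified else "failed")
    return "completed" if verified else "escalated"


audit = AuditLog()
status = run_workflow("upgrade", CaseContext("cust-42", 120), audit)
print(status, [entry["step"] for entry in audit.entries])
```

Each step writes to the audit log before the next one runs, so a failed or escalated request still leaves a trace of how far it got.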

What “Good” Looks Like: Key Design Principles

A strong enterprise AI architecture usually follows a few non-negotiable principles:

Separation of concerns: retrieval is not the same as generation; governance is not the same as UI

Least privilege access: models and agents should only access what they must

Human-in-the-loop where needed: for high-risk actions, approvals and escalation paths matter

Evaluation-driven development: measure quality before and after changes

Event and API readiness: avoid brittle point-to-point integrations

Observability by default: logs, traces, and cost metrics are part of the system

Notice what’s not here: “pick the fanciest model.” Architecture beats model hype in production environments.
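Two of these principles, least privilege and human-in-the-loop, are easy to show in code. The sketch below assumes a per-agent tool allowlist and a `high_risk` flag on tools; the agent names, tool names, and the `authorize` function are illustrative, not a reference to any specific framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tool:
    name: str
    high_risk: bool = False


# Hypothetical tool registry and per-agent allowlists.
TOOLS = {
    "lookup_order": Tool("lookup_order"),
    "issue_refund": Tool("issue_refund", high_risk=True),
}
AGENT_TOOLS = {
    "support-agent": {"lookup_order", "issue_refund"},
    "faq-agent": {"lookup_order"},
}


def authorize(agent: str, tool_name: str, approved_by: Optional[str] = None) -> str:
    # Least privilege: anything not explicitly allowlisted is denied.
    if tool_name not in AGENT_TOOLS.get(agent, set()):
        return "denied"
    # Human-in-the-loop: high-risk tools need a named approver.
    if TOOLS[tool_name].high_risk and approved_by is None:
        return "needs_approval"
    return "allowed"


print(authorize("faq-agent", "issue_refund"))                   # denied
print(authorize("support-agent", "issue_refund"))               # needs_approval
print(authorize("support-agent", "issue_refund", "manager-1"))  # allowed
```

Keeping this check outside the model prompt means the rule holds even when the model misbehaves.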

The Governance Side: Security, Safety, and Compliance

Enterprises don’t just ask, “Does it work?” They ask, “Is it safe, compliant, and auditable?”

Your architecture should support:

Data boundaries: what’s allowed into prompts, what must be redacted

Access control: role-based permissions for tools and data sources

Auditability: who asked what, what the system did, what changed

Guardrails: policy checks, sensitive content rules, blocked actions

Fallbacks: safe default behavior when confidence is low or systems fail

A practical approach is to treat AI actions like production transactions: every step should be traceable.
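One way to picture data boundaries and auditability together is a small wrapper that redacts a prompt and records what happened. The patterns below are deliberately simplified assumptions (a basic email regex and a US-style SSN format); production redaction usually needs a dedicated PII detection service, and `guarded_prompt` is a hypothetical helper name.

```python
import re
from datetime import datetime, timezone

# Simplified illustrative patterns, not production-grade PII detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# In-memory audit trail; a real system would write to durable storage.
AUDIT_TRAIL: list[dict] = []


def redact(text: str) -> str:
    # Replace matches with placeholders before text reaches a model.
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)


def guarded_prompt(user: str, raw_prompt: str) -> str:
    safe_prompt = redact(raw_prompt)
    # Record who asked, when, and whether anything was redacted.
    AUDIT_TRAIL.append({
        "user": user,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "redacted": safe_prompt != raw_prompt,
        "prompt": safe_prompt,
    })
    return safe_prompt


safe = guarded_prompt("alice", "Contact jane.doe@example.com, SSN 123-45-6789.")
print(safe)  # Contact [EMAIL], SSN [SSN].
```

Note that the audit entry stores the redacted prompt rather than the raw one, so the log itself does not become a new place for sensitive data to leak.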

Observability and Evaluation: The Part Teams Forget

Enterprise AI needs measurement—otherwise quality drifts silently.

At minimum, track:

Outcome quality: correctness, helpfulness, resolution rate

Safety quality: policy violations, leakage risk, unsafe outputs

Operational metrics: latency, uptime, error rates, retries

Cost metrics: token usage, model routing, cache hit rate

Escalation metrics: handoff rate, human override reasons

If you’re building AI into operations, “it feels good” is not a metric.
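A minimal sketch of how several of these metrics could be rolled up from per-request logs is shown below. The `RequestLog` fields and the sample values are assumptions for illustration; real pipelines would pull these from tracing or logging tools.

```python
import math
from dataclasses import dataclass


@dataclass
class RequestLog:
    latency_ms: float
    tokens: int
    cache_hit: bool
    error: bool
    escalated: bool


def summarize(logs: list[RequestLog]) -> dict:
    total = len(logs)
    if total == 0:
        return {}
    latencies = sorted(log.latency_ms for log in logs)
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * total) - 1)
    return {
        "requests": total,
        "p95_latency_ms": latencies[p95_index],
        "error_rate": sum(log.error for log in logs) / total,
        "cache_hit_rate": sum(log.cache_hit for log in logs) / total,
        "handoff_rate": sum(log.escalated for log in logs) / total,
        "avg_tokens": sum(log.tokens for log in logs) / total,
    }


logs = [
    RequestLog(120.0, 500, cache_hit=True, error=False, escalated=False),
    RequestLog(340.0, 1500, cache_hit=False, error=False, escalated=True),
    RequestLog(200.0, 800, cache_hit=False, error=True, escalated=False),
    RequestLog(90.0, 400, cache_hit=True, error=False, escalated=False),
]
summary = summarize(logs)
print(summary)
```

Outcome and safety quality are harder to compute automatically and usually need labeled examples or review, which is why they are left out of this operational summary.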

Common Mistakes That Break Enterprise AI

You can avoid a lot of pain by watching for these patterns:

Building UI-first instead of architecture-first (a nice chat window with no system integration)

Ignoring retrieval quality (bad RAG = confident wrong answers)

No governance plan (agents take actions without controls)

No evaluation loop (model updates cause regressions)

Over-automating too early (high-risk actions need phased rollout)

Most enterprise AI issues look like “AI is unreliable,” but the root cause is usually architectural.
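The missing evaluation loop is one of the cheaper mistakes to fix. As a sketch, a release gate can compare a candidate model against the current one on a fixed eval set before shipping. The eval questions, the substring-match scoring, and both toy "models" below are invented for illustration; real eval suites use richer scoring.

```python
from typing import Callable

# Hypothetical eval set: (question, substring expected in a correct answer).
EVAL_SET = [
    ("What is the refund window?", "30 days"),
    ("Which plan includes SSO?", "enterprise"),
    ("How long are logs retained?", "90 days"),
]


def accuracy(model: Callable[[str], str], eval_set: list[tuple[str, str]]) -> float:
    # Fraction of questions whose answer contains the expected substring.
    hits = sum(expected.lower() in model(q).lower() for q, expected in eval_set)
    return hits / len(eval_set)


def safe_to_ship(current, candidate, eval_set, tolerance: float = 0.0) -> bool:
    # Block the release if the candidate scores below the current model.
    return accuracy(candidate, eval_set) >= accuracy(current, eval_set) - tolerance


# Toy stand-ins for two model versions.
CURRENT_ANSWERS = {
    "What is the refund window?": "Refunds are accepted within 30 days.",
    "Which plan includes SSO?": "SSO is part of the Enterprise plan.",
    "How long are logs retained?": "Logs are kept for 30 days.",
}
CANDIDATE_ANSWERS = {
    "What is the refund window?": "Refunds are accepted within 14 days.",
    "Which plan includes SSO?": "The Pro plan includes SSO.",
    "How long are logs retained?": "Logs are kept for 90 days.",
}


def current_model(question: str) -> str:
    return CURRENT_ANSWERS[question]


def candidate_model(question: str) -> str:
    return CANDIDATE_ANSWERS[question]


print(safe_to_ship(current_model, candidate_model, EVAL_SET))  # False
```

Here the candidate fixes one answer but breaks two others, which is exactly the kind of regression that goes unnoticed without a before-and-after comparison.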

A Simple Roadmap to Get Started

If you’re early, don’t try to build everything at once. Start with a stable foundation and expand.

A practical sequence is:

Define your use-case boundaries: what AI can and cannot do

Standardize data access: permissions, sources, and governance

Add retrieval or orchestration (based on need): knowledge-first or workflow-first

Integrate with core systems: APIs, events, ticketing, CRM

Add evaluation + monitoring: measure quality and cost continuously

Scale safely: expand actions, reduce manual steps, add automation depth

This prevents the “pilot trap”: lots of demos, little production value.

Frequently Asked Questions

What is enterprise AI architecture?

Enterprise AI architecture is the design that connects data, models, workflows, governance, and business systems so AI can run securely and reliably at scale.

Why is enterprise AI architecture important?

Because production AI requires security, compliance, integrations, monitoring, and cost control—things that don’t matter as much in small pilots.

What are the main layers of enterprise AI architecture?

Most architectures include a data layer, retrieval (RAG) layer, model layer, orchestration/decision layer, integration layer, governance, observability, and application layer.

What is the difference between AI architecture and AI infrastructure?

Architecture is the blueprint (how components work together). Infrastructure is the underlying compute, storage, and networking that runs the components.

Do enterprises always need RAG?

Not always. RAG is essential when responses must be grounded in internal knowledge. For workflow-heavy systems, orchestration may be the priority.

What role does orchestration play in enterprise AI?

Orchestration coordinates steps across tools and systems—deciding what happens next, calling APIs, handling exceptions, and ensuring actions are traceable.

How do you make enterprise AI systems safe?

Use access control, audit logs, policy guardrails, PII handling, human approvals for sensitive actions, and continuous evaluation and monitoring.

How do you measure enterprise AI performance?

Track outcome quality (resolution/correctness), safety quality (violations), operational metrics (latency/errors), and cost metrics (token usage/routing).

What’s the biggest mistake teams make with enterprise AI?

Shipping a chatbot-like UI without governance, integrations, and evaluation—then discovering it can’t scale or be trusted in operations.

Can smaller companies benefit from enterprise AI architecture concepts?

Yes. Even small teams benefit from clear data boundaries, retrieval quality, basic monitoring, and modular design, just with simpler tooling.